Relari launches: testing and simulation stack for GenAI systems

"Harden your GenAI Systems with Synthetic Data"

Open-source evaluation framework and synthetic data generation pipeline. Relari helps AI teams simulate, test, and validate complex AI applications throughout the development lifecycle.

Founded by Yi Zhang & Pasquale Antonante

TL;DR

Relari is an open-source platform to simulate, test, and validate complex Generative AI (GenAI) applications. With 30+ open-source metrics, synthetic test-set generators, and online monitoring tools, it helps AI app developers achieve a high degree of reliability in mission-critical use cases such as compliance copilots, enterprise search, and financial assistance.

💡 Our Inspiration: Autonomous Vehicles

Just like autonomous vehicles promise to change how we move, GenAI applications promise to revolutionize the way we work. However, ensuring these systems are safe and reliable requires a shift in the development process. Autonomous vehicles need to drive billions of miles to ensure that they are safer than human drivers. This would take decades, so the industry relies on simulation and synthetic data to efficiently test and validate each iteration of the self-driving software stack.

🔴 Problem: GenAI Apps are Unreliable

LLM-based applications can be inconsistent and unreliable. This blocks GenAI’s adoption in mission-critical workflows and hurts user confidence and retention once deployed to production. Good testing infrastructure is paramount to achieving the quality users demand, but GenAI app developers across startups and enterprises struggle to define the right set of tests and quality-control standards for deployment.

The main challenges AI teams face are:

- Complex Pipelines: GenAI pipelines are becoming increasingly complex, and it is often difficult to pinpoint where problems originate.

- Gap from Evaluation to Reality: There is a huge gap between the metrics used in offline evaluation and user feedback, leading to distrust in offline evaluation results.

- Lack of Relevant Datasets: Models are often overfit to public datasets, which are also rarely relevant to a specific application. Manually curating custom datasets, however, is extremely time-consuming and costly.

🚀 Solution: Harden AI Systems with Simulation

Relari offers a complete testing and simulation stack for GenAI pipelines designed to directly address the problems above. Relari allows you to:

- Pinpoint problem root causes with modular evaluation: Define your pipeline and flexibly orchestrate modular tests to quickly analyze performance issues. Their open-source framework offers 30+ metrics covering text generation, code generation, retrieval, agents, and classification, with more coming soon.

- Simulate user behavior with close-to-human evaluators: Leverage user feedback to train custom evaluators that are 90%+ aligned with human evaluators (backed by Relari's research). Introduce a feedback loop connecting your production system and the development process.

- Accelerate development with synthetic data: Generate large-scale synthetic datasets tailored to your use case and stress test your AI pipeline. Ensure coverage of all the corner cases before shipping to users.
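To make the idea of modular evaluation concrete, here is a minimal sketch in plain Python. It does not use Relari's framework or reproduce its API. The function names, the sample fields, and the choice of metrics (set-based retrieval precision/recall and token-overlap F1) are all assumptions chosen for illustration. The point is only that scoring the retrieval step and the generation step separately shows which stage of a pipeline a failure comes from.

```python
from collections import Counter


def retrieval_precision_recall(retrieved, relevant):
    """Set-based precision and recall for the retrieval module."""
    retrieved_set, relevant_set = set(retrieved), set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall


def token_f1(answer, reference):
    """Token-overlap F1 between a generated answer and a reference answer."""
    answer_tokens = answer.lower().split()
    reference_tokens = reference.lower().split()
    overlap = sum((Counter(answer_tokens) & Counter(reference_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(answer_tokens)
    recall = overlap / len(reference_tokens)
    return 2 * precision * recall / (precision + recall)


def evaluate_sample(sample):
    """Score each pipeline module separately (hypothetical sample schema)."""
    precision, recall = retrieval_precision_recall(
        sample["retrieved_ids"], sample["relevant_ids"]
    )
    return {
        "retrieval_precision": precision,
        "retrieval_recall": recall,
        "answer_f1": token_f1(sample["answer"], sample["reference_answer"]),
    }


# Illustrative, made-up test case: the retriever misses one relevant document.
sample = {
    "retrieved_ids": ["doc_3", "doc_7"],
    "relevant_ids": ["doc_1", "doc_3"],
    "answer": "The filing deadline is March 15",
    "reference_answer": "The filing deadline is April 15",
}
scores = evaluate_sample(sample)
print(scores)
```

In this made-up case the retrieval recall is low, which points at the retriever rather than the generator as the first place to investigate. A real test set would average these per-module scores over many samples.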

Learn More

🌐 Visit relari.ai to learn more

📅 Book a demo with them!

👉 Introduce them to AI / ML / Data Science teams building mission-critical GenAI applications

⭐ Star Relari on GitHub

👥 Follow Relari on LinkedIn, GitHub, & X

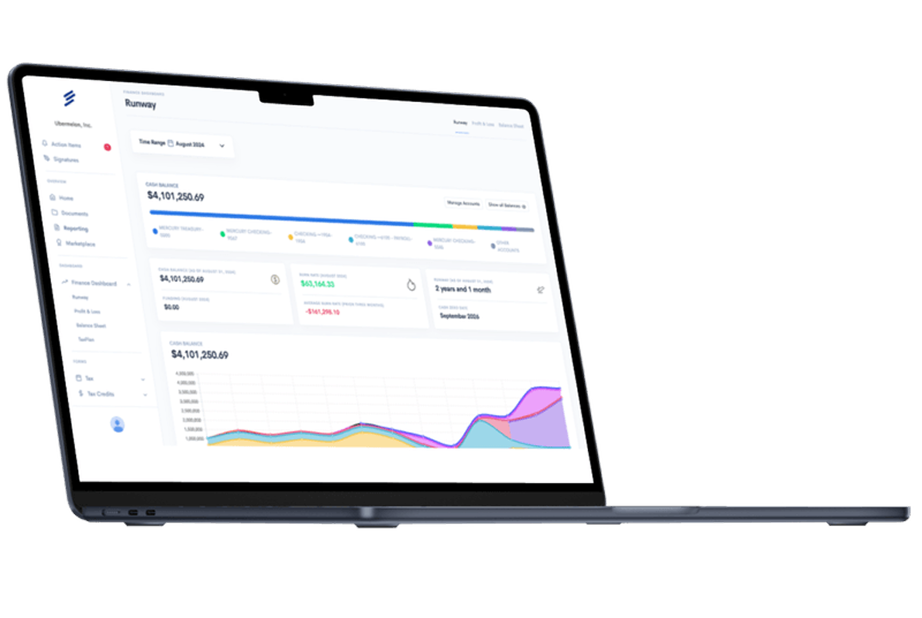
