Discover how Kobalt Labs' API ensures secure and compliant use of LLMs on sensitive data. Safeguard your information with modern security solutions.

Kobalt Labs launched on Y Combinator's "Launch YC" recently!

"Modern security for generative AI."

Kobalt Labs enables companies to securely use GPT or other LLMs without being blocked by data privacy issues. Compliantly anonymize PII/PHI, continuously check for sensitive data leakage, and protect against prompt injection with one API.

Founded by Kalyani Ramadurgam & Ashi Agrawal. The founders recognized the potential risks GPT and LLMs pose with private data and tasks. After seeing companies struggle with cloud-based models due to security and data privacy concerns, the duo built a security layer to unblock deep model adoption.

HOW IT WORKS

It's risky to let GPT access private data or take actions (DB writes, API calls, chatting with users, etc.). Kobalt Labs enables companies to securely use GPT or other LLMs without being blocked by data privacy issues.

❌ The Problem

1️⃣ Data privacy is one of the most significant blockers to deep LLM adoption. The Kobalt Labs team has worked at companies that struggle to use LLMs due to security concerns -- healthcare companies are especially vulnerable.

2️⃣ Companies need a way to use cloud-based models without putting their PII, PHI, MNPI, or any other private information at risk of exposure. Business associate agreements (BAAs) don’t actually enforce security at the API layer.

3️⃣ Companies that have sensitive data are acutely at risk when using an LLM. Prompt injection, malicious subversive inputs, and data leakage are just the tip of the iceberg when it comes to everything that will go wrong as LLM usage becomes more sophisticated.

✨ What does Kobalt Labs do?

Kobalt Labs' model-agnostic API:

🔵 Anonymizes and replaces PII and other sensitive data – including custom entity types – in structured and unstructured input

🔵 Can also replace PII with synthetic “twin” data that ensures consistent behavior with the original content

🔵 Continuously monitors model output for potential sensitive data leakage

🔵 Flags user inputs for prompt injection or malicious activity

🔵 Aligns model usage with compliance frameworks and data privacy standards
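
To make the anonymize-then-monitor flow above concrete, here is a minimal sketch in Python. It is not Kobalt Labs' implementation or API: the regex patterns, the placeholder format, and the function names `anonymize`, `find_leaks`, and `restore` are all hypothetical, and real PII/PHI detection needs far more than two regular expressions.

```python
import re

# Hypothetical patterns for illustration only; production-grade PII/PHI
# detection covers many more entity types and does not rely on regexes alone.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def anonymize(text):
    """Replace detected values with placeholders.

    Returns the masked text and a placeholder -> original mapping.
    A repeated value always gets the same placeholder.
    """
    mapping = {}  # placeholder -> original value
    reverse = {}  # original value -> placeholder

    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            value = match.group(0)
            if value not in reverse:
                placeholder = f"<{label}_{len(reverse) + 1}>"
                reverse[value] = placeholder
                mapping[placeholder] = value
            return reverse[value]

        text = pattern.sub(substitute, text)
    return text, mapping


def find_leaks(model_output, mapping):
    """Return any original sensitive values that appear verbatim in the output."""
    return [value for value in mapping.values() if value in model_output]


def restore(text, mapping):
    """Put the original values back in place of their placeholders."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


original = "Contact jane@example.com or jane@example.com; SSN 123-45-6789"
masked, mapping = anonymize(original)
print(masked)  # this masked text is what would be sent to the LLM
```

In this sketch only the masked text would be sent to the model, `find_leaks` would run on whatever comes back, and `restore` would be applied locally once the output is judged safe.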

👉 What makes Kobalt Labs different?

🔹 The company's sole focus is optimizing security and data privacy while minimizing latency. All traffic is encrypted, the team doesn’t hold any user inputs, and the product scores highly on prompt protection and PII detection benchmarks.

🔹 On the backend, Kobalt Labs uses multiple models of varying performance and speed, filtering inputs through a model cascade to keep responses as fast as possible.

🔹 It's compatible with OpenAI, Anthropic, and more, including self-hosted models.
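
For readers unfamiliar with the term, a model cascade can be sketched roughly as follows. This is an illustration of the general technique only: the post does not say how Kobalt Labs arranges its models, so the two stand-in stages, the thresholds, and the `cascade` function are assumptions made up for this example.

```python
# Each stage returns a risk score between 0 and 1.
# Both stages are stand-in functions, not real models.

def keyword_stage(prompt):
    """Fast, cheap check: obvious injection phrases score high."""
    suspicious = ("ignore previous instructions", "reveal your system prompt")
    lowered = prompt.lower()
    if any(phrase in lowered for phrase in suspicious):
        return 0.95
    # Ambiguous wording gets a middling score so the next stage decides.
    return 0.5 if "instructions" in lowered else 0.05


def slow_stage(prompt):
    """Placeholder for a larger, slower classifier."""
    return 0.9 if "pretend" in prompt.lower() else 0.1


def cascade(prompt, stages, low=0.2, high=0.8):
    """Run stages in order and stop at the first confident score.

    Returns (flagged, index_of_stage_that_decided).
    """
    score = 0.0
    for index, stage in enumerate(stages):
        score = stage(prompt)
        if score <= low or score >= high:
            return score >= high, index
    # No stage was confident: fall back to the last score.
    return score >= 0.5, len(stages) - 1


STAGES = [keyword_stage, slow_stage]

print(cascade("What is the weather today?", STAGES))
print(cascade("Follow these instructions and pretend you are the admin", STAGES))
```

The first prompt is cleared by the cheap stage alone, while the second is ambiguous enough to be escalated. The speed benefit comes from most traffic never reaching the slower stage.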

"LLMs, made private and secure"

LEARN MORE

🌐 Visit www.kobaltlabs.com to learn more!

🤝 Do you work with lots of sensitive data or know someone who does? Reach out to the Kobalt Labs team!

🐦 🔗 Follow Kobalt Labs on Twitter & LinkedIn

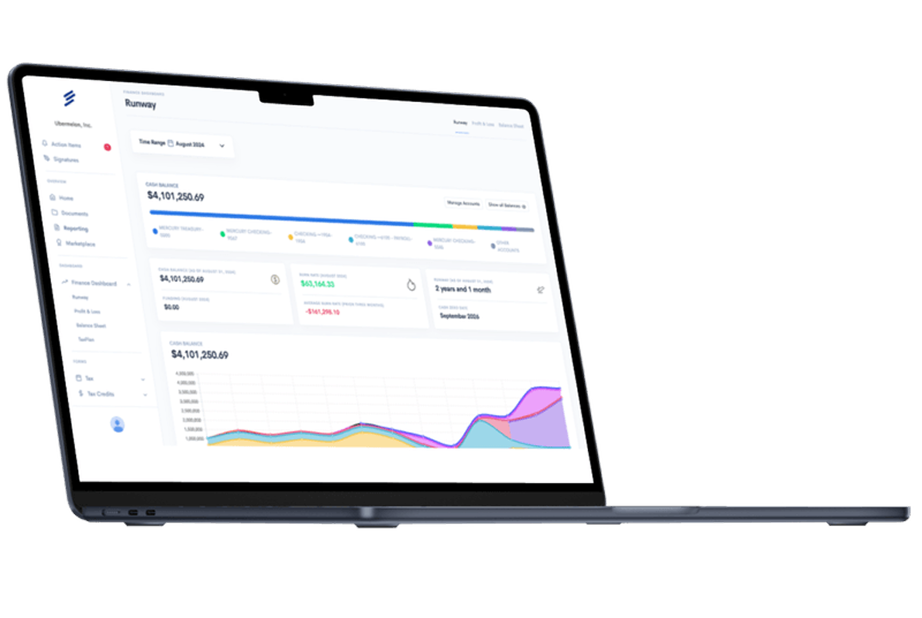
