Discover why AI will not destroy us. Explore the limitations of current AI systems and the need for intuitive understanding.

This video comes from a panel discussion on AI systems, specifically large language models like ChatGPT, hosted by Brian Greene. The panelists were Sébastien Bubeck, a research manager at Microsoft focused on large language models; Tristan Harris, an expert on the societal impacts of technology; and Yann LeCun, a pioneer in AI and chief AI scientist at Meta (formerly Facebook). The discussion centered on understanding and evaluating the current capabilities of systems like ChatGPT and where AI technology needs to go in the future.

How Large Language Models Work

Yann LeCun explained how large language models like ChatGPT are trained: they are shown huge amounts of text and learn to predict the next word in a sequence. Repeating that prediction one word at a time lets them generate fluent text. A limitation is that they can hallucinate, producing plausible-sounding but incorrect information, since they don't truly understand the meaning behind the words.
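To make the next-word idea concrete, here is a toy sketch in Python. It is a simple bigram counter, not how ChatGPT works. Real models use large neural networks over subword tokens and take far more context into account. The function names `train_bigram`, `predict_next` and `generate`, and the tiny corpus, are invented for illustration. Even so, the sketch shows both points above: fluent-looking output produced from word statistics alone, and a "hallucinated" sentence that never appears in the training text.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for every word, how often each following word appears."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def generate(counts, start, length=5):
    """Greedily extend `start` one predicted word at a time."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)

# Hypothetical toy corpus standing in for "huge amounts of text".
corpus = "the cat sat on the mat . the cat chased the mouse . the dog sat on the rug ."
model = train_bigram(corpus)
print(generate(model, "the", 4))  # the cat sat on the
print(generate(model, "dog", 4))  # dog sat on the cat
```

Starting from "dog", greedy prediction produces "dog sat on the cat". Every word pair in that output occurs in the corpus, but the sentence as a whole does not. Nothing in the model checks whether the sentence makes sense.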

Limitations of Current Systems

The speakers discussed the limitations of current AI systems in reasoning, planning and learning real-world knowledge. Tristan Harris argued that while they can fluently manipulate language, they lack true intelligence or understanding. Yann LeCun used intuitive physics as an example, arguing that even a house cat has more common sense than today's AI systems.

A Vision for Truly Intelligent AI

Yann LeCun shared his vision for creating AI that can exhibit the flexible intelligence seen in humans and animals. This includes developing systems that learn intuitive physics and how the world works simply by observing video, without explicit human labeling or supervision. The key, he argued, is to focus less on language tasks alone and more on learning interactive world models.
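The following is a loose, hypothetical illustration of the self-supervised idea. The training target is simply the next observation, so no human labeling is required. This is not the architecture LeCun proposes, which learns from raw video.

The sketch rests on several assumptions:
- The "video" is a list of simulated heights of a dropped ball.
- The form of the dynamics (next = 2*cur - prev + c) is fixed in advance.
- Only the constant c is learned, by least squares.

The names `simulate_drop`, `fit_accel_term`, `predict_height` and `DT` are invented for this example.

```python
DT = 0.1  # hypothetical time between frames, in seconds

def simulate_drop(height=100.0, steps=30, g=9.8, dt=DT):
    """Hypothetical 'video': the ball's height in each frame, with no labels."""
    return [height - 0.5 * g * (t * dt) ** 2 for t in range(steps)]

def fit_accel_term(frames):
    """Least-squares estimate of c in the assumed model next = 2*cur - prev + c.

    The target is just the next observed frame (self-supervised). For this
    one-parameter model, the least-squares solution is the mean residual.
    """
    residuals = [frames[t + 1] - 2 * frames[t] + frames[t - 1]
                 for t in range(1, len(frames) - 1)]
    return sum(residuals) / len(residuals)

def predict_height(prev, cur, c):
    """Predict the next frame's height from the two most recent frames."""
    return 2 * cur - prev + c

frames = simulate_drop()
c = fit_accel_term(frames)
print(round(c / DT ** 2, 3))  # -9.8: gravity recovered from observation alone
```

The fitted c, divided by the frame interval squared, comes out at about -9.8. The model therefore recovers gravitational acceleration purely from watching positions change. A real world model would also have to discover the form of the dynamics, which is the hard part this toy assumes away.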

Assessing Reasoning and Learning in Large Language Models

Sébastien Bubeck shared examples of the reasoning and learning capabilities of systems like GPT-4 that impressed him. These included writing a poem that gives a mathematical proof and drawing a unicorn in TikZ, a relatively obscure graphics language for LaTeX. However, he agreed that fundamental abilities like planning are still lacking and that we have a long way to go to achieve artificial general intelligence.

Conclusion

While recent progress in systems like ChatGPT is exciting, current AI still lacks the flexibility, common sense and learning abilities that we take for granted in humans. Going forward, there needs to be more focus on designing systems that can learn an intuitive understanding of the world around us. As Yann LeCun summarized, we still don't know how to build systems as smart as a cat!

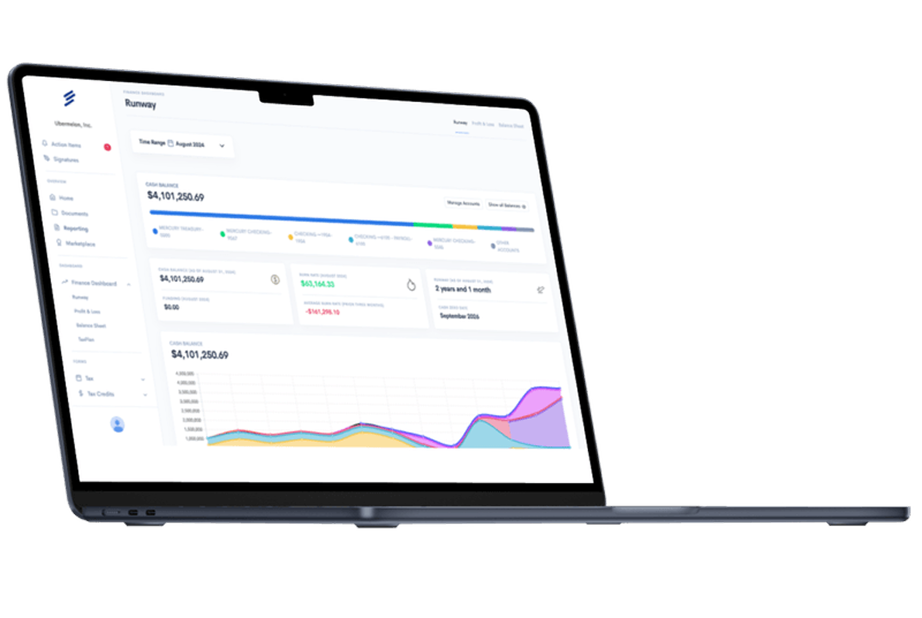